Lecture 5-1

Statistical Analysis of Urban Data

Network Science Institute | Northeastern University

NETS 7370 Computational Urban Science

2026-02-09

Welcome!

This week:

Statistical Analysis of Urban Data

Aims

- Understand the unique features of spatial data and how they impact statistical analysis.

- Learn techniques to measure and account for spatial autocorrelation and nonstationarity in urban data.

- Explore spatial clustering techniques in spatial data.

Spatial data in CUS

Computational Urban Science is primarily concerned with spatially embedded features of cities.

Some features are explicitly spatial: commuting and infrastructure networks, physical amenity visitation.

Some features are influenced by space: social, communication, and employment / opportunity networks.

- Remember from last week’s practical: higher likelihood of within-state friendships.

Spatial data is unique

The spatial structure of urban data requires special consideration.

Consider Tobler’s first “law” of Geography: “Everything is related to everything else, but near things are more related than distant things.”

In CUS, we want reliable, repeatable insights about urban systems. If everything is related to everything else:

How can we achieve reliable statistical estimates of the relationship between urban variables?

How can we measure causal relationships in spatially interconnected systems?

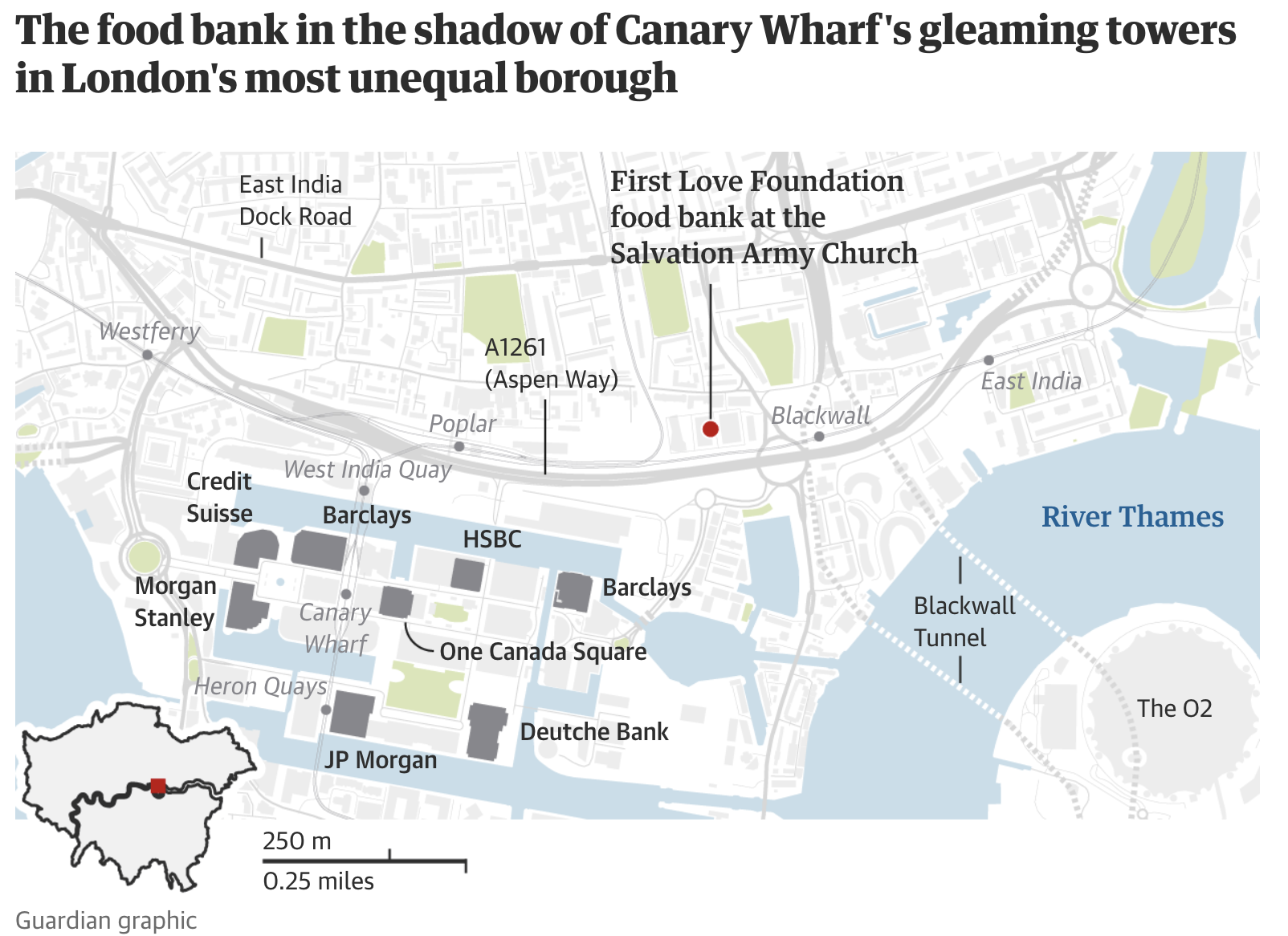

Key takeaway: proximity and adjacency

In Week 2, we discussed the Modifiable Areal Unit Problem (MAUP) and how the choice of scale affects analytical conclusions.

Today, we will use many definitions of proximity and adjacency as we aim to encode the spatial structure of our data into our analysis.

How do we define which things are “near” one another as described in Tobler’s law? Euclidean distance? Geodesic distance? Travel time? Semantic distance?

How do we define adjacency? \(k\) nearest-neighbors? What is \(k\)? What about physical boundaries between physically adjacent features?

Like MAUP, appropriate definitions of spatial structure in your data require your own scientific judgment.

Key takeaway: proximity and adjacency

A tale of two cities: London’s rich and poor in Tower Hamlets

Empirical regularities in spatial data

Is Tobler’s First Law a Law? I prefer “empirical regularities”.

Spatial features have consistent, repeated patterns which should inform how you address statistical and causal inference and other analyses of spatial data.

Some of these regularities are:

Spatial autocorrelation (a.k.a. spatial dependence or “clustering”)

Spatial nonstationarity (variation of statistical relationships across space)

Physical constraints on network structure

Spatial autocorrelation

Tobler’s first law revisited: “…near things are more related than distant things.”

This is an empirical observation which holds true for a wide range of spatial phenomena.

Spatial autocorrelation permits:

- Prediction / interpolation based on physical proximity.

Spatial autocorrelation hinders:

- Statistical inference (independence assumptions are violated for most spatial data)

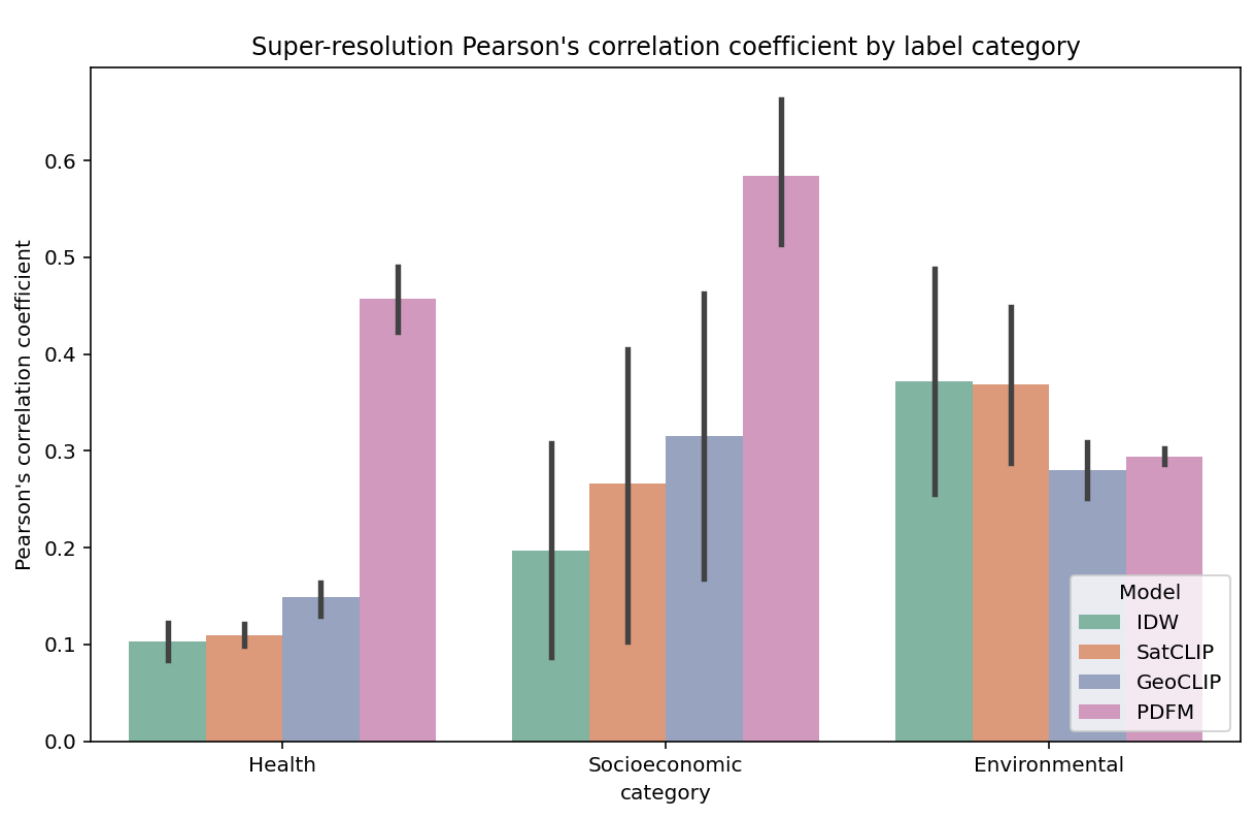

Spatial autocorrelation

A funny example: Inverse-distance Weighting (IDW) (1965) beats Google Research’s [1] elevation predictions:

General Geospatial Inference with a Population Dynamics Foundation Model [1]
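To make the baseline concrete, here is a minimal sketch of inverse-distance weighting in plain Python. The function name `idw`, the default `power` of 2, and the toy elevation values are illustrative assumptions; they are not taken from the cited paper.

```python
import math

def idw(known_coords, known_values, target, power=2):
    """Estimate the value at `target` as a distance-weighted mean of known values."""
    num = den = 0.0
    for coord, value in zip(known_coords, known_values):
        d = math.dist(coord, target)
        if d == 0:
            return value  # target coincides with an observation
        w = 1.0 / d ** power  # closer observations get larger weights
        num += w * value
        den += w
    return num / den

# Hypothetical elevation readings (metres) at two stations.
stations = [(0, 0), (10, 0)]
elevations = [100, 200]

near_first = idw(stations, elevations, (2, 0))   # pulled toward 100
midpoint = idw(stations, elevations, (5, 0))     # equal weights
```

The estimate relies only on spatial autocorrelation: it assumes nothing about the process beyond "nearby values are similar".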

Measuring spatial autocorrelation

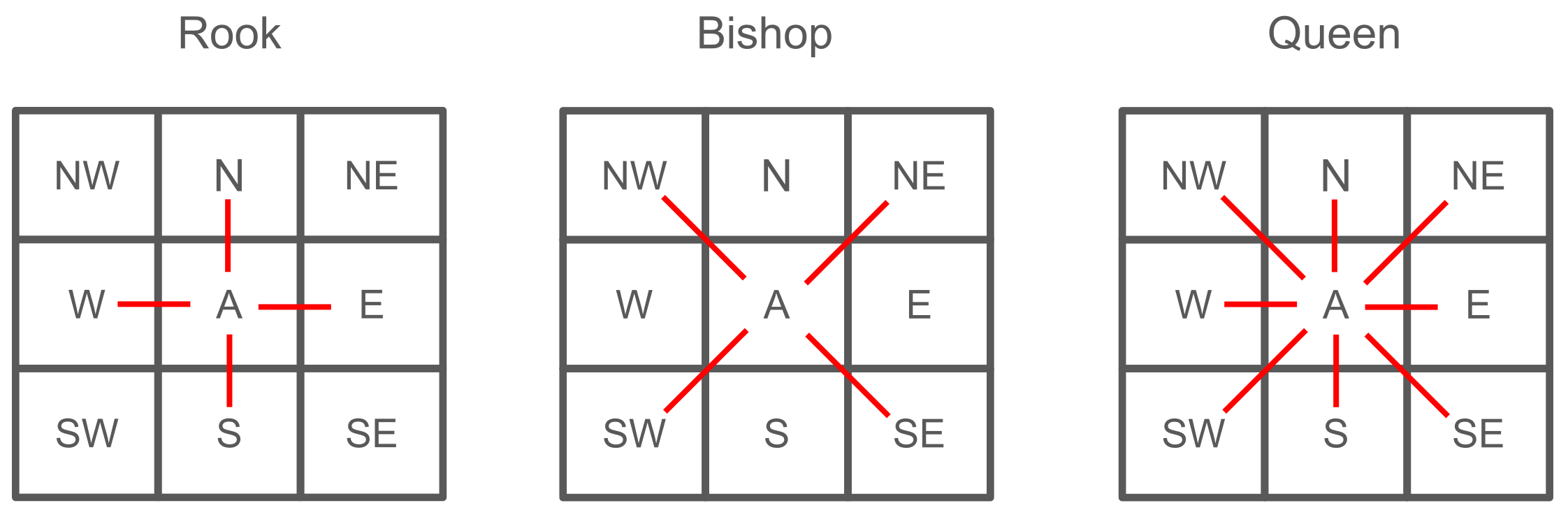

Most spatial statistics depend on a spatial weights matrix \(W\). It encodes which spatial units are considered neighbors.

Formally

- \(w_{ij}\) measures the influence of location \(j\) on location \(i\)

- Usually \(w_{ij} > 0\) if locations \(i\) and \(j\) are neighbors, else \(w_{ij} = 0\)

- Often \(w_{ii} = 0\) (a location does not influence itself)

- Often row-standardized: \(\sum_j w_{ij} = 1\) for all \(i\)
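The properties above can be sketched with a small, dependency-free example. This builds a row-standardized \(k\)-nearest-neighbor weights matrix as a list of lists; the function name `knn_weights` and the toy coordinates are assumptions for illustration. In practice a dedicated spatial weights library would normally be used instead.

```python
import math

def knn_weights(coords, k):
    """Row-standardized k-nearest-neighbor weights as a dense list of lists."""
    n = len(coords)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Rank every other location by Euclidean distance from location i.
        others = sorted(
            (j for j in range(n) if j != i),
            key=lambda j: math.dist(coords[i], coords[j]),
        )
        for j in others[:k]:
            W[i][j] = 1.0 / k  # equal weight per neighbor, so each row sums to 1
    return W

# Three nearby locations and one remote location.
coords = [(0, 0), (1, 0), (2, 0), (10, 0)]
W = knn_weights(coords, k=2)
```

Note that \(W\) need not be symmetric: the remote location counts location 2 as a neighbor (`W[3][2] == 0.5`), but not the other way around (`W[2][3] == 0.0`).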

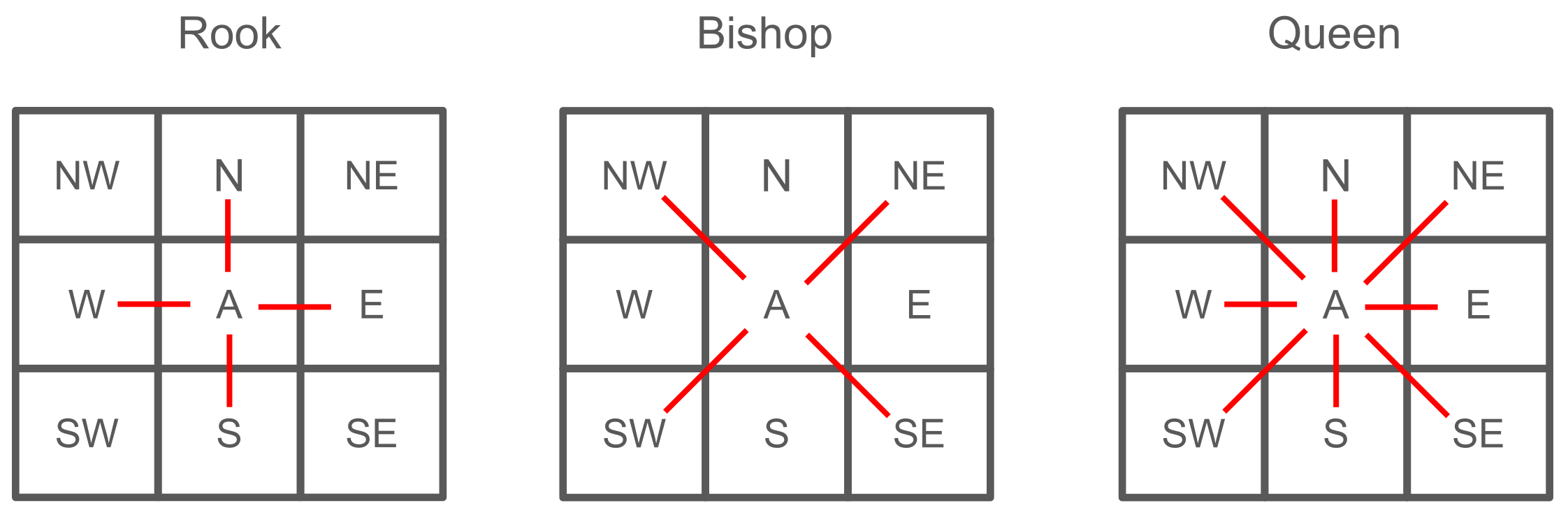

There are many ways to define neighbors. For example, these are the “contiguity-based” definitions.

Measuring spatial autocorrelation

Other methods:

- Distance-based weights: Define neighbors as all locations within a distance threshold.

- Kernel-based weights: Use a kernel function to assign weights based on distance, with closer neighbors receiving higher weights.

- k-nearest neighbors: Each location is connected to its k closest locations.

- Network-based weights: Define neighbors based on connectivity in a network (e.g., road network, social networks).

Takeaway: Choosing \(W\) is a scientific judgment, not a technical detail. It should be guided by how interaction actually occurs in the urban system you study.
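As one illustration of how the choice of \(W\) changes what counts as “near”, here is a minimal sketch of kernel-based weights. The Gaussian kernel, the `bandwidth` parameter, and the function name are assumptions chosen for the example; other kernels are equally valid.

```python
import math

def gaussian_kernel_weights(coords, bandwidth):
    """Row-standardized weights that decay smoothly with distance."""
    n = len(coords)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:  # keep w_ii = 0
                d = math.dist(coords[i], coords[j])
                W[i][j] = math.exp(-0.5 * (d / bandwidth) ** 2)
        row_sum = sum(W[i])
        W[i] = [w / row_sum for w in W[i]]  # row-standardize
    return W

coords = [(0, 0), (1, 0), (2, 0), (10, 0)]
W_kernel = gaussian_kernel_weights(coords, bandwidth=2.0)
```

Unlike a \(k\)-nearest-neighbor matrix, every pair of locations receives a nonzero weight here; the bandwidth controls how quickly influence fades.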

Measuring spatial autocorrelation

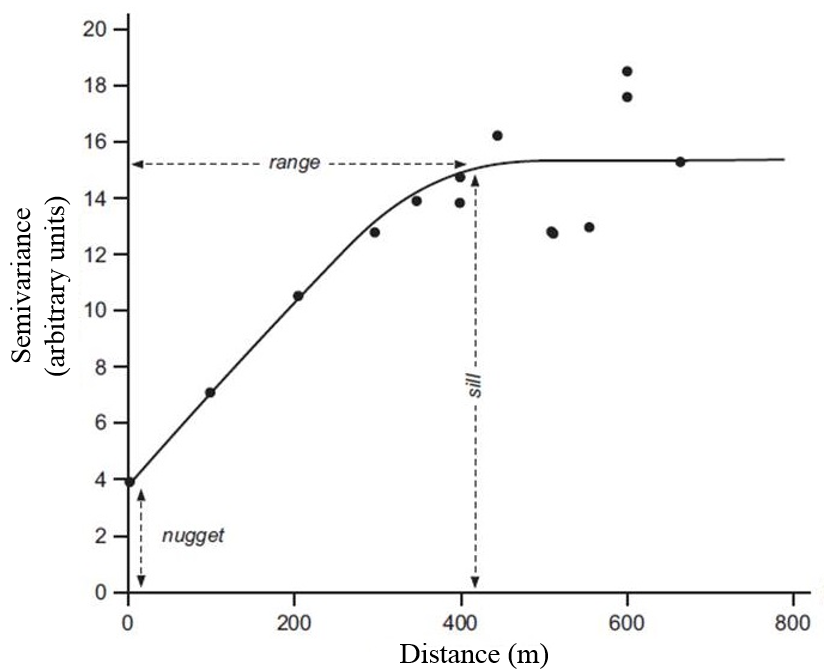

Spatial variogram: how much do two observations differ as a function of the distance between them?

Useful for assessing degree of spatial autocorrelation of continuous spatial variables.

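\[\gamma(h) = \frac{1}{2N(h)} \sum_{i=1}^{N(h)} (z(x_i) - z(x_i + h))^2\]

Where:

- \(h\) = separation (lag) distance between two locations

- \(N(h)\) = number of observation pairs separated by lag \(h\)

- \(z(x_i)\) = observed value at location \(x_i\)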
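A minimal sketch of the empirical variogram follows. Because real observations rarely sit at exactly lag \(h\), this version groups pairs into distance bins; the binning scheme, function name, and toy transect are assumptions for illustration.

```python
import math
from itertools import combinations

def empirical_variogram(coords, values, bin_edges):
    """Return gamma per distance bin: half the mean squared difference of pairs."""
    n_bins = len(bin_edges) - 1
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for i, j in combinations(range(len(coords)), 2):
        d = math.dist(coords[i], coords[j])
        for b in range(n_bins):
            if bin_edges[b] <= d < bin_edges[b + 1]:
                sums[b] += (values[i] - values[j]) ** 2
                counts[b] += 1
                break
    # None marks bins that contain no pairs.
    return [s / (2 * c) if c else None for s, c in zip(sums, counts)]

# Toy transect: values rise steadily along a line, so gamma grows with lag.
coords = [(x, 0) for x in range(6)]
values = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
gamma = empirical_variogram(coords, values, bin_edges=[0.5, 1.5, 2.5, 3.5])
```

A variogram that rises with distance, as here, is the signature of positive spatial autocorrelation: nearby pairs differ less than distant pairs.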

Measuring spatial autocorrelation

Moran’s I

- Global measure of spatial clustering typically ranging from -1 (perfect dispersion) to 1 (perfect clustering).

- Measures how strongly the value at one location is correlated with values at nearby locations, where “nearby” is encoded by the weights \(w_{ij}\).

\[I = \frac{N}{W} \cdot \frac{\sum_{i=1}^{N}\sum_{j=1}^{N} w_{ij}(x_i - \bar{x})(x_j - \bar{x})}{\sum_{i=1}^{N} (x_i - \bar{x})^2}\]

Where:

- \(N\) = number of spatial units

- \(W\) = sum of all spatial weights \(w_{ij}\) (a scalar here, not the weights matrix itself)

- \(x_i\), \(x_j\) = values at locations \(i\) and \(j\)

- \(\bar{x}\) = mean of the variable
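The formula translates almost line for line into code. This is a minimal sketch in plain Python; the function name `morans_i` and the four-unit toy layout are assumptions for illustration, and no significance test is included.

```python
def morans_i(values, W):
    """Global Moran's I for a list of values and a dense weights matrix W."""
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]              # deviations from the mean
    total_weight = sum(sum(row) for row in W)   # the scalar W in the formula
    num = sum(W[i][j] * z[i] * z[j] for i in range(n) for j in range(n))
    den = sum(zi ** 2 for zi in z)
    return (n / total_weight) * num / den

# Four units on a line; each unit neighbors the units directly beside it.
W = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
clustered = [1, 1, 5, 5]     # similar values sit together
alternating = [1, 5, 1, 5]   # every neighbor is dissimilar

i_clustered = morans_i(clustered, W)      # positive
i_alternating = morans_i(alternating, W)  # negative
```

The same four values give a positive or negative statistic depending only on their arrangement in space, which is exactly what a non-spatial summary would miss.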

Measuring spatial autocorrelation

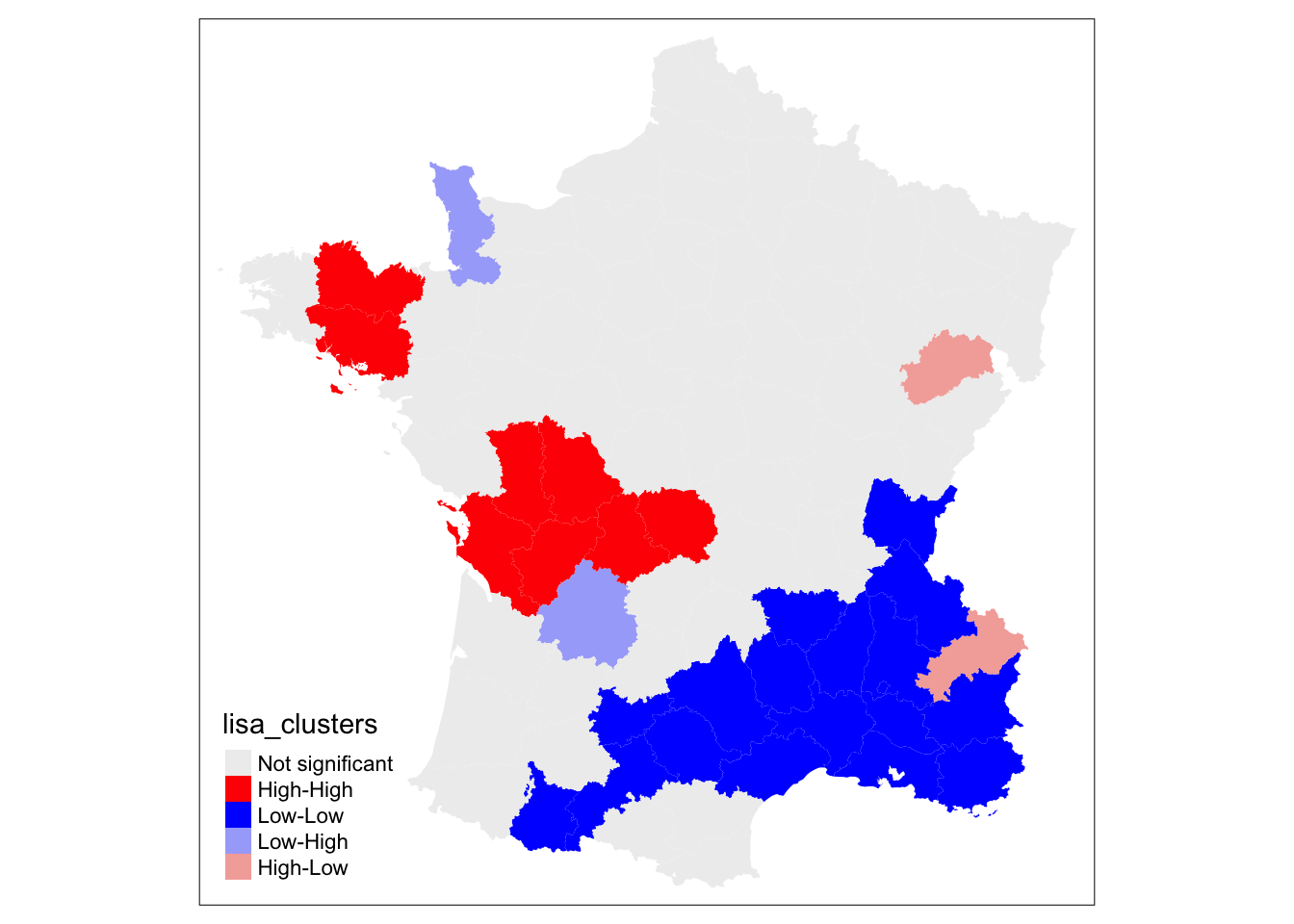

Local Indicators of Spatial Association (LISA)

- Local version of Moran’s I, assigned to each area and compared to an area’s neighbors [2].

- LISA results are expressed as the value of a spatial variable relative to its neighbors and the global mean:

- “High-High” or “Low-Low” (High / Low local value with High / Low values of neighbors - i.e. spatial clusters)

- “Low-High”, “High-Low” (High / Low local value with Low / High values of neighbors - i.e. spatial outliers)
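The quadrant labels above can be sketched as follows. This minimal example computes a local Moran statistic per unit and labels it by the sign of its own deviation and of its neighbors' average deviation. The function name and toy data are assumptions, and the permutation-based significance testing normally used with LISA is deliberately omitted.

```python
def lisa_quadrants(values, W):
    """Return (local Moran statistic, quadrant label) for each unit."""
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]
    m2 = sum(zi ** 2 for zi in z) / n  # variance of the variable
    results = []
    for i in range(n):
        # Spatial lag: weighted average deviation of unit i's neighbors.
        lag = sum(W[i][j] * z[j] for j in range(n)) / sum(W[i])
        local_i = z[i] * lag / m2
        # Values exactly at the mean are labeled "Low" in this sketch.
        own = "High" if z[i] > 0 else "Low"
        nbr = "High" if lag > 0 else "Low"
        results.append((local_i, f"{own}-{nbr}"))
    return results

# Six units on a line: a high-value block followed by a low-value block.
values = [9, 8, 9, 1, 2, 1]
W = [[1.0 if abs(i - j) == 1 else 0.0 for j in range(6)] for i in range(6)]
lisa = lisa_quadrants(values, W)
labels = [label for _, label in lisa]
```

Units in the interior of each block are labeled as clusters, while the two units at the block boundary are labeled as spatial outliers and receive negative local statistics.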

Spatial nonstationarity

Another feature of spatial data: statistical relationships can vary across space

There are multiple techniques to address spatial autocorrelation and nonstationarity:

Geographically weighted regression:

- Estimates local regression coefficients, giving greater weight to nearby observations
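The core idea of a local coefficient can be sketched with a single predictor. This is not a full GWR implementation: it fits one weighted least-squares slope at one focal location, using a Gaussian distance kernel. The kernel, the `bandwidth` value, and the toy data (slope 1 in the west, slope 3 in the east) are assumptions for illustration.

```python
import math

def local_slope(coords, x, y, focal, bandwidth):
    """Weighted least-squares slope of y on x, weighting observations near `focal`."""
    w = [math.exp(-0.5 * (math.dist(focal, c) / bandwidth) ** 2) for c in coords]
    total = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / total
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / total
    cov = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    var = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    return cov / var

# Ten locations along a line; the x-y relationship differs between halves.
coords = [(s, 0) for s in range(10)]
x = [1, 2, 3, 4, 5, 1, 2, 3, 4, 5]
y = [xi * 1 for xi in x[:5]] + [xi * 3 for xi in x[5:]]

slope_west = local_slope(coords, x, y, focal=(0, 0), bandwidth=1.0)
slope_east = local_slope(coords, x, y, focal=(9, 0), bandwidth=1.0)
```

A single global regression would report one compromise slope; the local fits recover roughly 1 in the west and roughly 3 in the east.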

Fixed effects models:

We used one last week!

Used to handle unobserved location-specific variation that impacts dependent variables. Only allows interpretation of within-unit effects.
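One way to see why only within-unit effects are interpretable is the "within" transformation: subtract each unit's mean from its observations, then regress. The sketch below uses a single predictor and two hypothetical units, A and B, that share a slope of 2 but have different baselines; all names and numbers are assumptions for illustration.

```python
from collections import defaultdict

def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    x_bar, y_bar = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    var = sum((a - x_bar) ** 2 for a in x)
    return cov / var

def demean_within(groups, values):
    """Subtract each group's mean from its own observations."""
    totals = defaultdict(lambda: [0.0, 0])
    for g, v in zip(groups, values):
        totals[g][0] += v
        totals[g][1] += 1
    return [v - totals[g][0] / totals[g][1] for g, v in zip(groups, values)]

groups = ["A", "A", "A", "B", "B", "B"]
x = [1, 2, 3, 4, 5, 6]
y = [12, 14, 16, 8, 10, 12]  # y = 2x + 10 in A, y = 2x + 0 in B

pooled = ols_slope(x, y)  # ignores the unit-specific baselines
within = ols_slope(demean_within(groups, x), demean_within(groups, y))
```

The pooled slope is negative because unit B has both higher \(x\) and a lower baseline; after demeaning, the shared within-unit slope of 2 is recovered. Differences between units are absorbed and can no longer be interpreted.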

More on GWR and spatially-aware statistical inference next week!

Warning: Edge / Boundary Effects

Most geostatistical analysis happens within a constrained spatial boundary

For proximity- or adjacency-based statistical methods (like GWR):

- Boundary locations can show spurious statistical relationships because of a lack of neighbors in the adjacency matrices used to parameterize models

Spatial clustering

Spatial clustering techniques account for spatial proximity when defining clusters.

Some techniques support varying cluster density, producing clusters of varying size.

Spatial clustering is useful for: dimensionality reduction of spatial features and for detecting spatial outliers.

In practical 5-1: note the difference between K-means clusters and geographically contiguous SKATER clusters (SKATER accounts for spatial proximity).

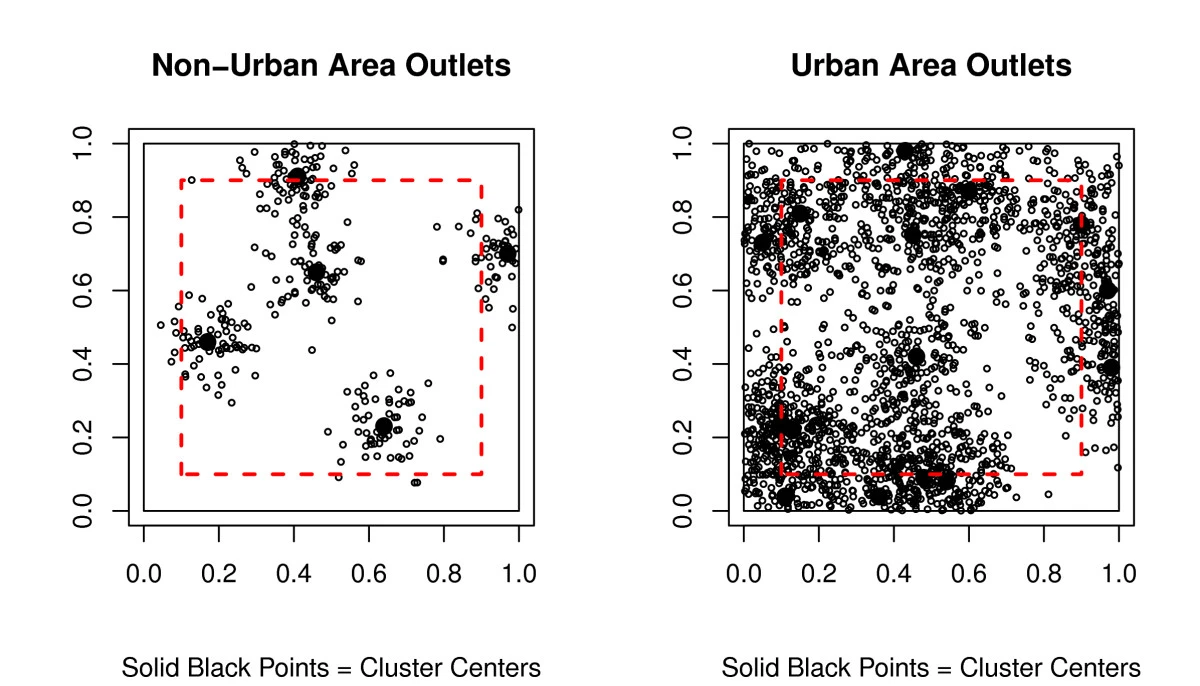

DBSCAN - Density Based Spatial Clustering of Applications with Noise

Spatial clustering algorithms

Most common spatial clustering algorithms:

- DBSCAN [3] [4]

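- Defined by two parameters:

- \(minPts\): Minimum number of points within distance \(\varepsilon\) of a point for it to count as a core point (a point in the dense interior of a cluster).

- \(\varepsilon\): Neighborhood radius. Border points lie within \(\varepsilon\) of a core point but have fewer than \(minPts\) neighbors within \(\varepsilon\) themselves.

- Outliers (noise) are neither core points nor within \(\varepsilon\) of any core point.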

For \(minPts = 4\), \(\varepsilon\) indicated by circle radius. Red: core points, Yellow: border points, Blue: outlier.
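The core / border / outlier distinction can be sketched in a few lines of plain Python. This is a minimal, quadratic-time illustration rather than a production implementation; it assumes a point counts as its own neighbor, and the function name and toy points are hypothetical.

```python
import math

def dbscan(points, eps, min_pts):
    """Return (cluster labels, point kinds); label -1 marks noise."""
    n = len(points)
    # Each neighborhood includes the point itself.
    neighbors = [
        [j for j in range(n) if math.dist(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    core = [len(nb) >= min_pts for nb in neighbors]
    labels = [-1] * n
    cluster = 0
    for i in range(n):
        if not core[i] or labels[i] != -1:
            continue
        labels[i] = cluster
        stack = [i]
        while stack:
            p = stack.pop()
            for q in neighbors[p]:
                if labels[q] == -1:
                    labels[q] = cluster
                    if core[q]:
                        stack.append(q)  # only core points extend a cluster
        cluster += 1
    kinds = [
        "core" if core[i] else "border" if labels[i] != -1 else "noise"
        for i in range(n)
    ]
    return labels, kinds

# A dense square of four points, one point on its fringe, one far away.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (2.4, 1), (8, 8)]
labels, kinds = dbscan(points, eps=1.5, min_pts=4)
```

With \(minPts = 4\), the four square corners are core points, the fringe point joins their cluster as a border point, and the distant point is left as noise.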

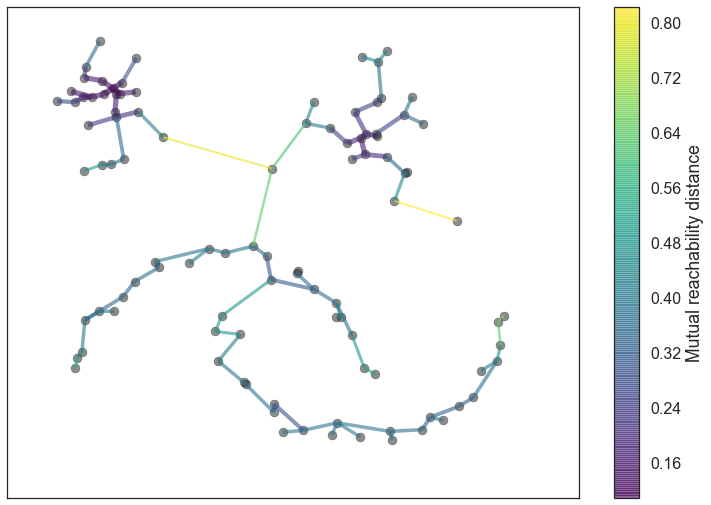

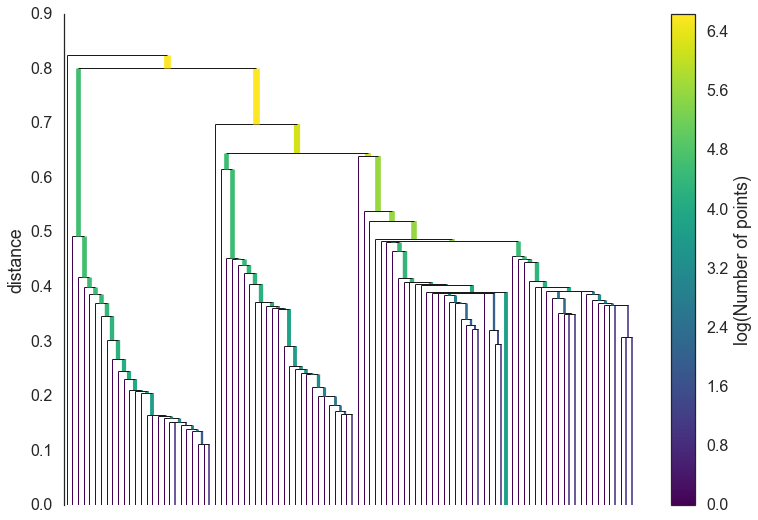

Spatial clustering algorithms

- HDBSCAN

- Replaces a single \(\varepsilon\) with a range of distance values defined by a minimum spanning tree of mutual reachability between each data point and its \(minPts\) nearest neighbors.
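The mutual reachability distance underlying that spanning tree can be sketched as follows. Here a point's core distance is taken to be the distance to its \(minPts\)-th nearest other point; implementations differ on whether the point itself is counted, so treat that convention, along with the function names and toy points, as an assumption.

```python
import math

def core_distances(points, min_pts):
    """Distance from each point to its min_pts-th nearest other point."""
    out = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        out.append(dists[min_pts - 1])
    return out

def mutual_reachability(points, min_pts):
    """Pairwise max(core(a), core(b), d(a, b)); zero on the diagonal."""
    core = core_distances(points, min_pts)
    n = len(points)
    return [
        [
            0.0 if i == j
            else max(core[i], core[j], math.dist(points[i], points[j]))
            for j in range(n)
        ]
        for i in range(n)
    ]

# Three close points and one isolated point on a line.
points = [(0, 0), (1, 0), (2, 0), (10, 0)]
M = mutual_reachability(points, min_pts=2)
```

The isolated point sits 8 units from its nearest neighbor, but its mutual reachability distance to that neighbor is 9 (its own core distance), so sparse points are pushed further away from dense regions before the spanning tree is built.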

Geodemographic analysis

Geodemographic analysis groups spatial units (e.g., tracts, neighborhoods, CBGs) based on similar demographic characteristics, often while encouraging spatial coherence [5], [6], [7].

Typical inputs:

- Income, education, employment, housing characteristics from the census.

- Mobility patterns from GPS data.

- Amenity visitation patterns from social media data.

Typical outputs:

- Neighborhood “types” or “profiles”

- Maps of socioeconomic structure

- A reduced representation of high-dimensional census data

Key distinction from point clustering: We are clustering attributes of places, not locations of points. Spatial proximity is often encouraged, but not required.

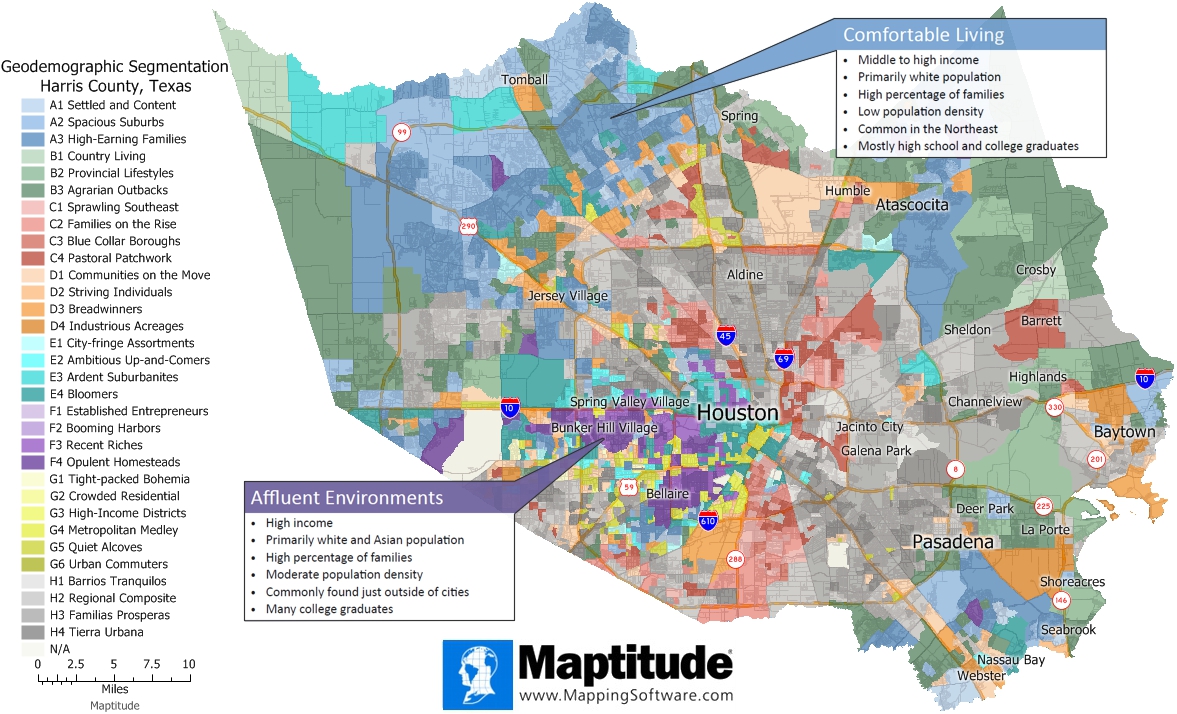

Geodemographic analysis

From Maptitude

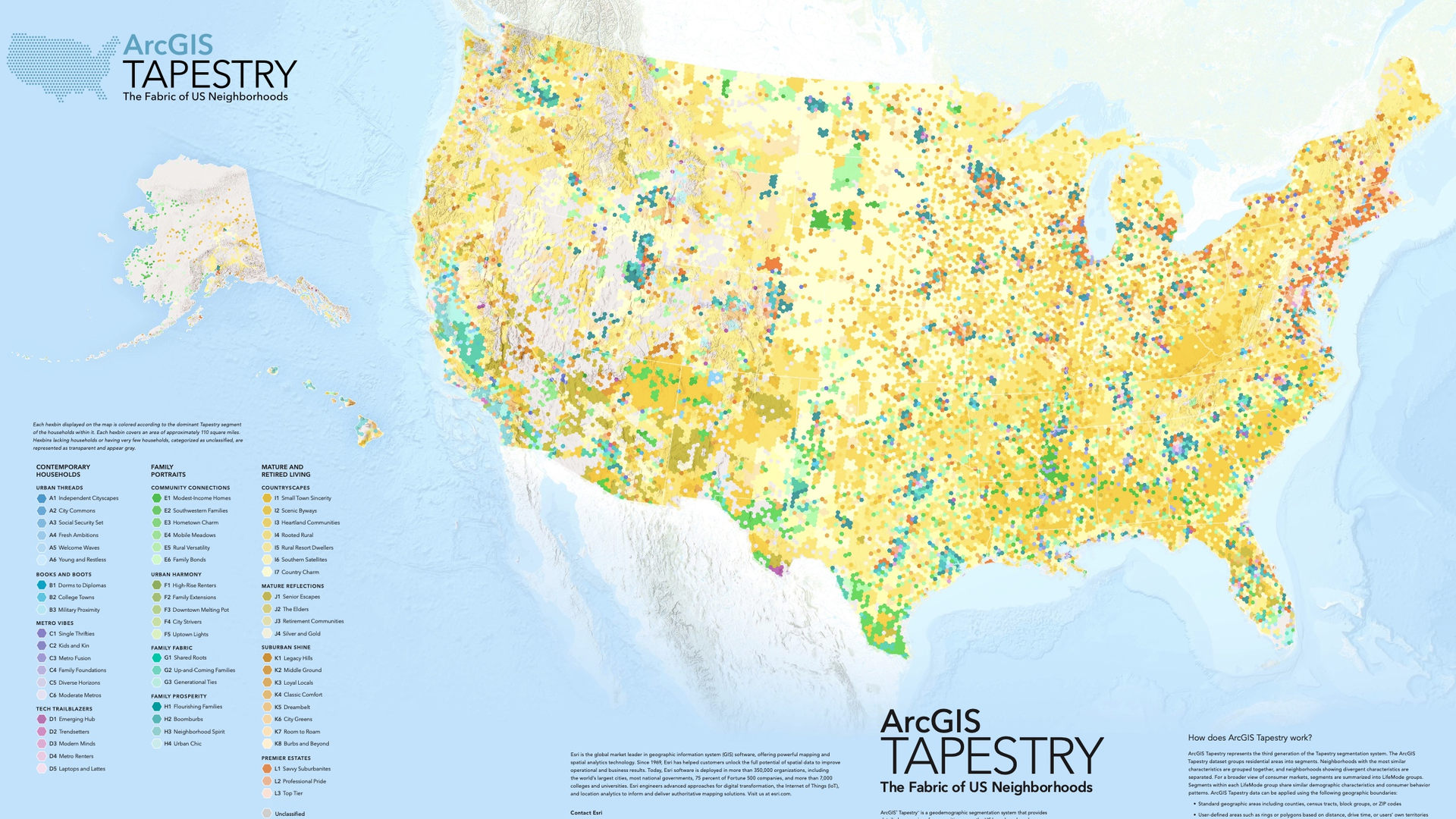

Geodemographic analysis

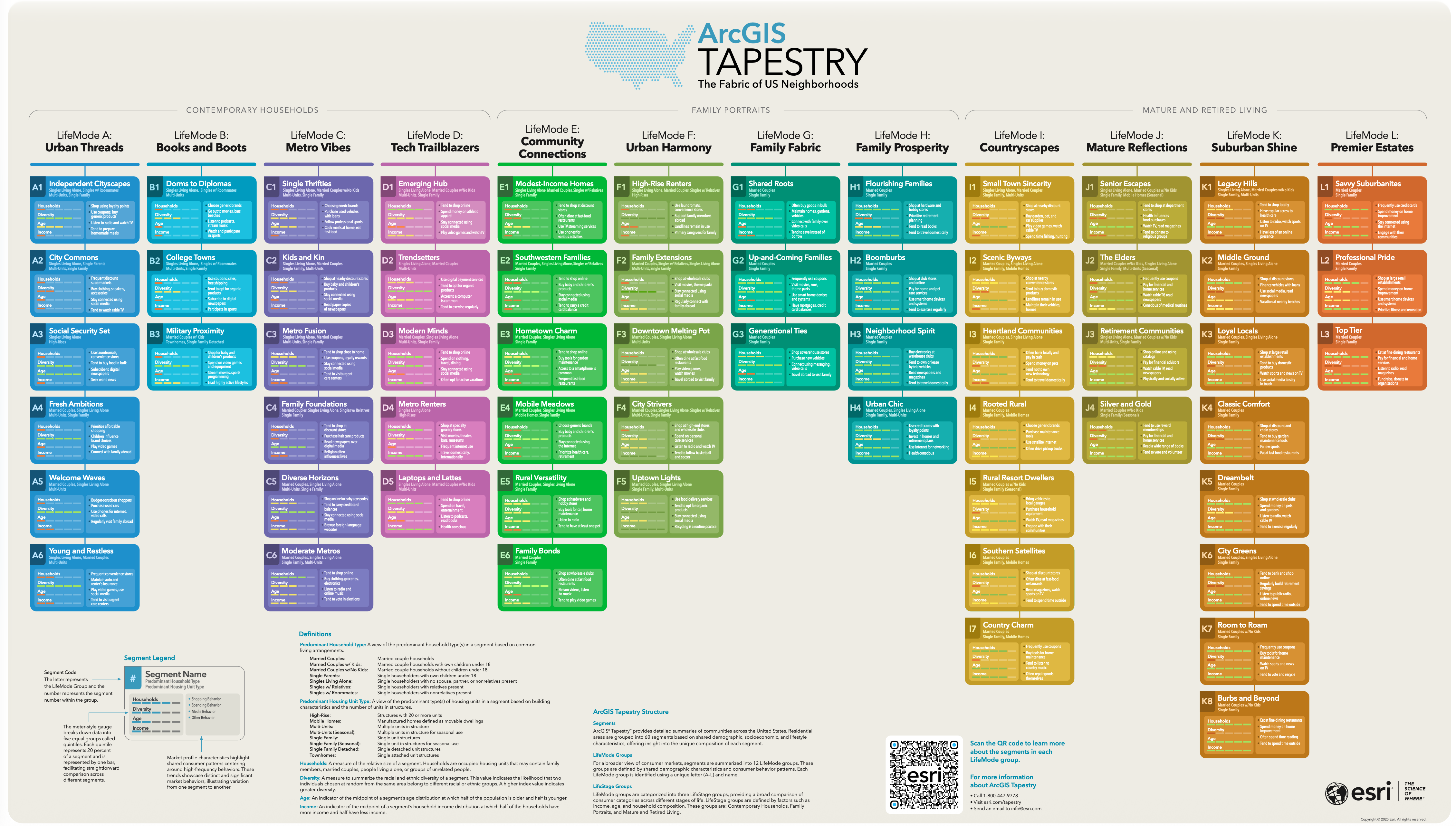

From ESRI Tapestry

Geodemographic analysis

From ESRI Tapestry

Geodemographic analysis

How does it work: combining feature similarity + spatial structure

Two common approaches:

- Feature-only clustering: Clusters are defined solely based on similarity of demographic features, without considering spatial proximity. This can lead to non-contiguous clusters.

- Spatially-constrained clustering: Clusters are defined based on both feature similarity and spatial proximity, often using algorithms that encourage spatial contiguity (e.g., SKATER, REDCAP).
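The effect of a contiguity constraint can be sketched with a simple greedy procedure. This is not SKATER or REDCAP; it is a hypothetical agglomerative scheme that repeatedly merges the two adjacent clusters with the most similar mean feature value. The function name, the single feature, and the toy adjacency are all assumptions for illustration.

```python
def constrained_clusters(features, adjacency, k):
    """Greedy agglomerative clustering that only merges touching clusters."""
    clusters = {i: {i} for i in range(len(features))}

    def mean(members):
        return sum(features[m] for m in members) / len(members)

    def touching(a, b):
        return any(v in adjacency[u] for u in clusters[a] for v in clusters[b])

    while len(clusters) > k:
        candidates = [
            (abs(mean(clusters[a]) - mean(clusters[b])), a, b)
            for a in clusters for b in clusters
            if a < b and touching(a, b)
        ]
        if not candidates:
            break  # no adjacent pairs left to merge
        _, a, b = min(candidates)
        merged = clusters.pop(b)
        clusters[a] |= merged
    return sorted(sorted(c) for c in clusters.values())

# Five tracts on a line with one feature (e.g., a standardized income score).
features = [1.0, 1.2, 9.0, 1.5, 9.2]
adjacency = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}

regions = constrained_clusters(features, adjacency, k=2)
```

Grouping on feature similarity alone would put tracts 0, 1, and 3 together (low values) and tracts 2 and 4 together (high values), producing non-contiguous clusters. The constrained version returns two contiguous regions instead, at the cost of less homogeneous clusters.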

Geodemographic clusters are low-dimensional spatial representations of data. They are therefore models of the data and should be evaluated as such. They are not “ground truth” representations of urban structure.

References

CUS 2025, ©SUNLab group